Agenta vs Fallom

Side-by-side comparison to help you choose the right product.

Agenta unifies your team's journey from scattered prompts to reliable, collaborative LLM applications.

Last updated: March 1, 2026

Fallom empowers you to optimize your AI agents with real-time observability and seamless performance tracking.

Last updated: February 28, 2026

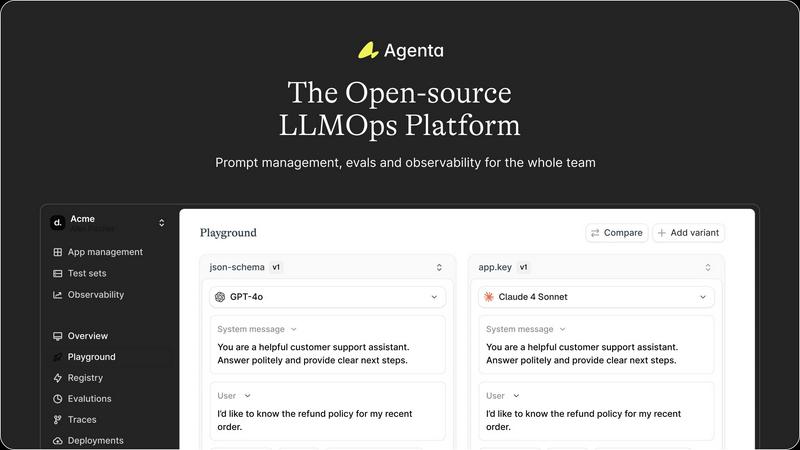

Visual Comparison

Agenta

Fallom

Feature Comparison

Agenta

Unified Playground & Experimentation

Agenta provides a central, model-agnostic playground where your team can safely experiment with different prompts, parameters, and models from any provider side-by-side. This eliminates the need for scattered scripts and documents. Every iteration is automatically versioned, creating a complete history of your experiments so you can track what changed, why, and its impact. Found a problematic output in production? You can instantly save it as a test case and begin debugging right in the same interface.

Systematic Evaluation Framework

Move beyond "vibe testing" with Agenta's evaluation system. It lets you set up a systematic process to run experiments, track results, and validate every change before deployment. The platform supports LLM-as-a-judge evaluators, custom code evaluators, and built-in metrics. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, and integrate human feedback from domain experts into the evaluation workflow.
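To make the idea of a custom code evaluator concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK or API; `TestCase`, `exact_match`, `run_evaluation`, and `fake_app` are hypothetical names invented for this illustration, and `fake_app` stands in for a real LLM application.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TestCase:
    """One evaluation example: an input prompt and the answer we expect."""
    prompt: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Hypothetical evaluator: 1.0 if the normalized strings match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    app: Callable[[str], str],
    cases: list[TestCase],
    evaluator: Callable[[str, str], float],
) -> float:
    """Run every test case through the app and return the mean score."""
    scores = [evaluator(app(case.prompt), case.expected) for case in cases]
    return sum(scores) / len(scores) if scores else 0.0


def fake_app(prompt: str) -> str:
    """Stand-in for an LLM application (assumption: canned answers only)."""
    answers = {"Capital of France?": "Paris", "2 + 2?": "5"}
    return answers.get(prompt, "")


cases = [TestCase("Capital of France?", "paris"), TestCase("2 + 2?", "4")]
mean_score = run_evaluation(fake_app, cases, exact_match)
print(f"mean score: {mean_score}")
```

Running the same test set before and after a prompt change gives a number to compare, which is the basic step that separates systematic evaluation from spot-checking outputs by eye.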

Production Observability & Debugging

When your LLM app is live, Agenta gives you clear visibility. It traces every user request, allowing you to pinpoint the exact step where failures occur. You and your team can annotate these traces to discuss issues or gather user feedback directly. With a single click, any problematic trace can be turned into a test case, closing the feedback loop. Live, online evaluations monitor performance continuously to detect regressions as they happen.

Collaborative Workflow for Whole Teams

Agenta breaks down silos by providing tools for every team member. It offers a safe, no-code UI for domain experts to edit and experiment with prompts. Product managers and experts can run evaluations and compare experiments directly from the UI, while developers work via a full-featured API. This parity between UI and API creates one central hub where everyone collaborates on experiments, versions, and debugging with real data.

Fallom

Real-Time Observability

Fallom provides real-time observability for AI agents, enabling users to track every tool call and analyze timings. This feature allows engineering teams to debug with confidence and understand the performance of their AI systems through a live dashboard.

Cost Attribution

With Fallom's cost attribution feature, users can track spending per model, user, and team. This provides full transparency for budgeting and financial accountability, ensuring that organizations can effectively manage AI-related expenditures.
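The sketch below shows one way per-model, per-user, and per-team cost attribution could be computed from logged calls. It is not Fallom's implementation or API; the `Call` record, the `PRICE_MICRO_USD` table, and all prices are hypothetical values chosen for illustration. Costs are kept as integer micro-dollars to avoid floating-point rounding.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Call:
    """One logged model call (hypothetical record shape)."""
    model: str
    user: str
    team: str
    input_tokens: int
    output_tokens: int


# Hypothetical prices in micro-USD per token: (input, output).
PRICE_MICRO_USD = {"model-a": (3, 15), "model-b": (1, 2)}


def call_cost(call: Call) -> int:
    """Cost of a single call in micro-USD."""
    input_price, output_price = PRICE_MICRO_USD[call.model]
    return call.input_tokens * input_price + call.output_tokens * output_price


def attribute(calls: list[Call], key: str) -> dict[str, int]:
    """Sum call costs grouped by one field: 'model', 'user', or 'team'."""
    totals: dict[str, int] = defaultdict(int)
    for call in calls:
        totals[getattr(call, key)] += call_cost(call)
    return dict(totals)


calls = [
    Call("model-a", "alice", "support", 1000, 200),
    Call("model-b", "bob", "support", 500, 100),
    Call("model-a", "alice", "research", 100, 50),
]
by_model = attribute(calls, "model")
by_user = attribute(calls, "user")
by_team = attribute(calls, "team")
print(by_model, by_user, by_team)
```

The same list of calls is aggregated three ways, which is why recording the user and team on every call at logging time matters: attribution cannot be reconstructed later if those fields are missing.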

Compliance and Audit Trails

Fallom is built with compliance in mind, offering audit trails that support regulatory and attestation requirements such as the EU AI Act, GDPR, and SOC 2. The audit trails include input/output logging, model versioning, and user consent tracking to help organizations meet their legal obligations.

Session Tracking and Grouping

The platform allows users to group traces by session, user, or customer to provide complete context. By organizing interactions in this way, teams can easily analyze user behavior and improve the overall performance of their AI agents.
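As a rough sketch of what grouping by session, user, or customer means in practice, the example below buckets trace records by a chosen field. The record fields (`trace_id`, `session_id`, `user_id`, `customer_id`) and the `group_traces` helper are assumptions made for this illustration, not Fallom's data model.

```python
from collections import defaultdict


def group_traces(traces: list[dict], key: str) -> dict[str, list[dict]]:
    """Bucket trace records by the value of one field, preserving order."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for trace in traces:
        groups[trace[key]].append(trace)
    return dict(groups)


# Hypothetical trace records.
traces = [
    {"trace_id": "t1", "session_id": "s1", "user_id": "u1", "customer_id": "acme"},
    {"trace_id": "t2", "session_id": "s1", "user_id": "u1", "customer_id": "acme"},
    {"trace_id": "t3", "session_id": "s2", "user_id": "u2", "customer_id": "acme"},
]

by_session = group_traces(traces, "session_id")
session_ids = {
    session: [trace["trace_id"] for trace in group]
    for session, group in by_session.items()
}
print(session_ids)
```

Grouping by `session_id` reconstructs a single conversation, while grouping by `customer_id` widens the view to every interaction from one account.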

Use Cases

Agenta

Streamlining Enterprise Chatbot Development

A financial services company is building a customer support chatbot. Their domain experts, compliance officers, and developers need to collaborate tightly. Using Agenta, they centralize prompt versions, run evaluations against regulatory compliance checklists and customer intent accuracy, and observe live interactions to quickly debug hallucinations or incorrect advice, ensuring a reliable and compliant final product.

Building and Tuning Complex AI Agents

A team is developing a multi-step research agent that searches the web, summarizes findings, and generates reports. Debugging is a nightmare when only the final output is wrong. With Agenta, they evaluate each intermediate step in the agent's reasoning chain, identify which tool call failed, and use the unified playground to iteratively fix the prompt for that specific step, dramatically improving the agent's reliability.

Managing Rapid Product Iteration with LLMs

A product team at a SaaS company uses LLMs to generate personalized email content. Marketing wants to test new tones, while engineers worry about stability. Agenta allows them to A/B test different prompt variations systematically, gather quantitative scores on engagement metrics and qualitative feedback from the sales team, and confidently deploy the winning variant with full version control and rollback capability.

Academic Research and Model Benchmarking

A research lab is comparing the performance of various open-source and proprietary LLMs on a new benchmark task. They use Agenta's model-agnostic playground to run the same prompt templates across all models, automate scoring using custom evaluation scripts, and maintain a rigorous, reproducible record of all experiments and results in one platform, streamlining their publication process.

Fallom

Customer Support Optimization

Organizations can use Fallom to optimize their customer support operations by gaining insights into AI interactions. This allows teams to identify common issues, improve response times, and enhance customer satisfaction through better AI performance.

Regulatory Compliance Management

With Fallom's audit trails, companies can document how their AI systems behave and manage their compliance requirements more effectively. This use case is particularly relevant for businesses in regulated industries that must adhere to specific legal frameworks.

Debugging and Performance Tuning

Fallom enables engineering teams to debug complex AI agent workflows by visualizing timing waterfalls and tool calls. This helps in identifying bottlenecks and improving the efficiency of AI systems, leading to faster response times and better resource utilization.
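A timing waterfall can be sketched in a few lines of plain Python. This is an illustration of the concept only, not Fallom's dashboard or SDK; the `Span` class, the span names, and the timings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Span:
    """One step in an agent run, with start and end offsets in milliseconds."""
    name: str
    start_ms: int
    end_ms: int

    @property
    def duration_ms(self) -> int:
        return self.end_ms - self.start_ms


def slowest(spans: list[Span]) -> Span:
    """Return the span with the longest duration (the likely bottleneck)."""
    return max(spans, key=lambda span: span.duration_ms)


def render_waterfall(spans: list[Span], ms_per_char: int = 100) -> str:
    """Draw each span as a bar offset by its start time, one line per span."""
    lines = []
    for span in sorted(spans, key=lambda s: s.start_ms):
        offset = " " * (span.start_ms // ms_per_char)
        bar = "#" * max(1, span.duration_ms // ms_per_char)
        lines.append(f"{span.name:<12}{offset}{bar} {span.duration_ms} ms")
    return "\n".join(lines)


# Hypothetical spans from a three-step agent run.
spans = [
    Span("plan", 0, 200),
    Span("web_search", 200, 1100),
    Span("summarize", 1100, 1500),
]
bottleneck = slowest(spans)
waterfall = render_waterfall(spans)
print(waterfall)
print(f"bottleneck: {bottleneck.name}")
```

Laying the bars out against a shared time axis makes it obvious which tool call dominates the total latency, which is harder to see from a flat list of durations.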

Cost Management and Budgeting

By using Fallom’s cost attribution feature, organizations can manage their AI-related expenditures more effectively. Per-model, per-user, and per-team spending data supports budgeting, cost forecasting, and financial planning.

Overview

About Agenta

The journey of building with large language models is often a tale of chaos. Prompts are scattered across emails and Slack threads, experiments are launched on gut feeling, and debugging a failure in production feels like searching for a needle in a haystack. This is the unpredictable reality most AI teams face, where brilliant ideas get lost in siloed workflows and unreliable deployments. Agenta emerges as the guiding path through this wilderness. It is an open-source LLMOps platform designed to be the single source of truth for teams building reliable LLM applications. Agenta transforms the fragmented process into a structured, collaborative journey. It brings developers, product managers, and domain experts together into one unified workflow, allowing them to experiment with prompts, run systematic evaluations, and observe application behavior in production, all from a centralized platform. By replacing guesswork with evidence and silos with collaboration, Agenta empowers teams to iterate quickly, validate every change, and ship AI products they can trust.

About Fallom

Fallom is a cutting-edge AI-native observability platform designed to transform how organizations monitor and manage their customer-facing AI agents. In the complex landscape of AI operations, where even minor miscommunications can lead to customer dissatisfaction, Fallom offers a beacon of clarity. It addresses the black box problem of production AI by providing engineering teams with the tools they need to gain complete visibility into their large language model (LLM) and agent workloads. With Fallom, users can track every interaction, from prompts to model outputs, and analyze tool calls in real time. This level of transparency not only improves debugging and performance but also supports compliance with industry regulations. Built for teams transitioning from experimental prototypes to full-scale deployments, Fallom empowers users to operate their AI applications with the confidence and reliability that are essential in today’s fast-paced digital landscape. The journey from uncertainty to mastery over AI operations starts here, with Fallom leading the way.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is a fully open-source platform. You can dive into the codebase on GitHub, self-host it on your own infrastructure, and contribute to its development. This ensures transparency, avoids vendor lock-in, and allows for deep customization to fit your specific LLMOps workflow and security requirements.

How does Agenta handle data privacy and security?

As an open-source platform, Agenta gives you full control over your data. You can deploy it within your private cloud or on-premise environment, ensuring that all prompts, evaluation data, and production traces never leave your network. This is crucial for enterprises in regulated industries like healthcare, finance, or legal services.

Can I use Agenta with my existing LLM framework?

Absolutely. Agenta is designed to be framework-agnostic. It seamlessly integrates with popular frameworks like LangChain and LlamaIndex, and it works with any model provider (OpenAI, Anthropic, Cohere, open-source models via Ollama, etc.). You can bring your existing applications and connect them to Agenta for the management, evaluation, and observability features.

Who on my team should use Agenta?

Agenta is built for the entire LLM application team. Developers use the API and SDK for integration, product managers and domain experts use the no-code UI to run evaluations and tweak prompts, and AI leads use the platform to oversee the entire experimentation lifecycle and production health. It bridges the gap between technical and non-technical stakeholders.

Fallom FAQ

What is Fallom?

Fallom is an AI-native observability platform that provides real-time insights into AI agent interactions, enabling engineering teams to monitor, debug, and optimize their AI operations with clarity and confidence.

How does Fallom ensure compliance with regulations?

Fallom includes comprehensive audit trails, input/output logging, model versioning, and user consent tracking, which collectively help organizations meet compliance requirements such as the EU AI Act, GDPR, and SOC 2.

Can Fallom be integrated with existing AI systems?

Yes, Fallom is built on an OpenTelemetry-native SDK, making it compatible with a wide range of AI providers. This allows users to integrate it seamlessly into their existing AI systems without vendor lock-in.

How does Fallom improve debugging for AI agents?

Fallom provides detailed visibility into every interaction, including timing waterfalls and tool calls. This information allows engineering teams to pinpoint issues, optimize performance, and ensure more effective AI interactions.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform, a specialized tool designed to streamline the complex journey of building and deploying large language model applications. It brings order to the often chaotic process by centralizing prompts, evaluations, and collaboration in one place. Teams often explore the landscape for alternatives driven by unique needs. This could be due to specific budget constraints, a requirement for different feature sets, or the need to integrate with an existing company tech stack. The search for the right tool is a common step in any team's evolution. When evaluating options, focus on what will best support your team's specific journey. Key considerations include the platform's ability to foster collaboration, its approach to testing and observability, and how well it integrates into your current workflow to reduce friction and accelerate development.

Fallom Alternatives

Fallom is an advanced observability platform specifically designed for AI applications. It empowers engineering teams to navigate the complexities of AI agent deployment by providing complete transparency into every interaction, from prompts to outputs. This clarity is crucial as teams transition from initial prototypes to fully operational systems where performance, reliability, and compliance become paramount. Users often seek alternatives to Fallom for several reasons, including pricing, specific feature sets, or platform requirements that another tool meets better. When choosing an alternative, it's essential to consider factors such as the level of observability offered, ease of integration, compliance capabilities, and the ability to provide actionable insights that can enhance AI performance and user experience.