Claude Fast vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Claude Fast

Claude Fast is an intelligent coding partner that learns and grows with you.

Last updated: March 1, 2026

OpenMark AI

Stop guessing which AI model fits your task and let OpenMark benchmark over 100 models for you in minutes.

Last updated: March 26, 2026

Feature Comparison

Claude Fast

Skill Activation Hook

Imagine never having to remind Claude of its own capabilities. The Skill Activation Hook automatically appends relevant skill recommendations to your prompts before Claude even sees them. This ensures 100% adherence to the framework's best practices. When you ask about deployment, it instantly loads the Infra Master skill. When you need UI help, the frontend specialist is activated. It means Claude Code always has the right skills loaded at the right time, eliminating the frustration of forgotten context and manual skill management.
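To picture the idea, here is a minimal sketch of a prompt hook. It is not Claude Fast's actual implementation: the keyword table, the skill identifiers, and the function `append_skill_hints` are hypothetical, and real matching is likely more sophisticated than a keyword lookup.

```python
# Hypothetical keyword-to-skill table; the identifiers are illustrative only.
SKILL_KEYWORDS = {
    "infra-master": ("deploy", "deployment", "server", "vps"),
    "frontend-specialist": ("ui", "css", "component", "layout"),
}


def append_skill_hints(prompt: str) -> str:
    """Return the prompt with a line recommending any skills whose keywords match."""
    # Crude tokenization: lowercase, drop a little punctuation, split on whitespace.
    words = set(prompt.lower().replace("?", " ").replace(",", " ").split())
    matched = [skill for skill, keys in SKILL_KEYWORDS.items() if words & set(keys)]
    if not matched:
        return prompt  # nothing relevant, so leave the prompt untouched
    return f"{prompt}\n\n[Recommended skills: {', '.join(matched)}]"
```

Calling `append_skill_hints("How do I deploy this app?")` returns the original question followed by a recommendation line for the hypothetical `infra-master` skill, while a prompt with no matching keywords passes through unchanged.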

Native Task Sync & Session Management

Your progress is sacred, and Claude Fast treats it that way. It features a bidirectional sync with Claude's native task management system, transforming high-level plans into executable, tracked tasks within your documentation. Furthermore, every conversation is automatically saved as a session file. This means you can close your laptop, switch devices, or come back days later and pick up exactly where you left off. You never lose momentum or waste hours recreating session state after a restart.

Intelligent Context Routing & Min-Maxing

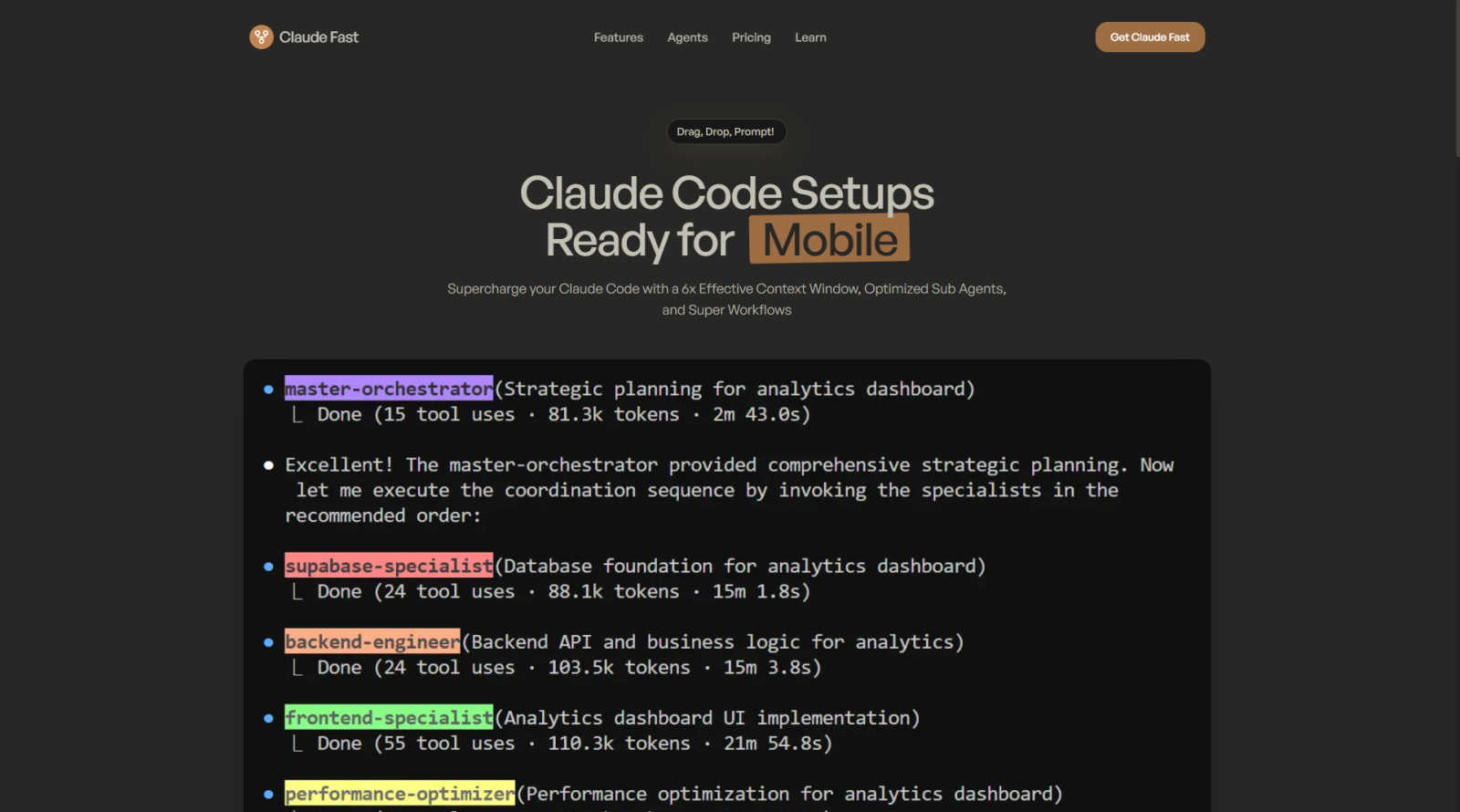

Not all tasks are created equal, and Claude Fast knows it. Simple queries are routed directly to the appropriate specialist agent for a fast, efficient response. Complex, multi-step challenges are sent to the central orchestrator for planning and delegation. This "Context Min-maxing" lets the main Claude conserve its context window by delegating frequently, while sub-agents are guided to collect maximum context in their temporary windows, leading to 6x more efficient use of context before compaction is needed.
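The routing decision can be pictured with a small sketch. Everything here is an assumption made for illustration: the marker phrases, the 40-word threshold, and the names `Route` and `route_request` are hypothetical and do not describe how Claude Fast actually classifies requests.

```python
from dataclasses import dataclass


@dataclass
class Route:
    target: str  # "specialist" or "orchestrator"
    reason: str


# Hypothetical phrases that suggest a multi-step request.
MULTI_STEP_MARKERS = (" then ", " after that ", "step by step")


def route_request(prompt: str, max_simple_words: int = 40) -> Route:
    """Send short single-step prompts to a specialist, everything else to the orchestrator."""
    text = f" {prompt.lower()} "
    if any(marker in text for marker in MULTI_STEP_MARKERS):
        return Route("orchestrator", "multi-step wording")
    if len(prompt.split()) > max_simple_words:
        return Route("orchestrator", "long request")
    return Route("specialist", "short single-step request")
```

Under these assumptions, "Rename this variable" goes straight to a specialist, while "Build the API, then write tests and deploy" is handed to the orchestrator for planning.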

The Infra Master Skill

Deployment and server management are no longer a weekend-consuming mystery. This powerful skill condenses expert-level infrastructure knowledge, allowing Claude to SSH into your VPS and set up, secure, and manage deployments for you. It handles the complexities of getting your application from a local build to a live, production-ready environment. This turns what was once a daunting, error-prone process into a simple conversation, empowering you to ship with confidence.

OpenMark AI

Plain Language Task Description

Forget complex configuration files or scripting. OpenMark AI lets you start your benchmarking journey by simply describing the task you want to test in everyday language. Whether it's "extract dates and product names from customer emails" or "generate three creative taglines for a new coffee brand," you define the challenge naturally. The platform then helps you structure this into a validated benchmark, removing the technical barrier to rigorous testing and letting you focus on what matters: the task itself.

Multi-Model Comparison in One Session

The core of OpenMark's power is its ability to run your exact same prompt against dozens of leading models from providers like OpenAI, Anthropic, and Google simultaneously. You don't have to run separate tests, copy outputs between tabs, or manually calculate costs. In one unified session, you get side-by-side results, allowing for a direct, apples-to-apples comparison that reveals clear winners and surprising contenders for your specific use case.

Holistic Performance Metrics

OpenMark moves beyond simple accuracy. It provides a multi-dimensional report card for each model, including scored quality for your task, the actual cost per API request, response latency, and—importantly—stability metrics from repeat runs. This last feature shows you the variance in outputs, helping you identify models that are consistently good versus those that just got lucky once, which is critical for shipping reliable features.
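To see why repeat runs matter, consider a minimal sketch of a stability summary. The scores are made up, and `summarize_runs` is a hypothetical helper; OpenMark's own scoring and stability formulas are not described here, so standard deviation is used only as a common stand-in for variance.

```python
from statistics import mean, pstdev


def summarize_runs(scores: list[float]) -> dict[str, float]:
    """Summarize repeat-run quality scores for one model."""
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),  # population standard deviation; 0.0 means identical runs
        "worst": min(scores),
    }


# Made-up scores for two hypothetical models, five runs each.
steady = summarize_runs([0.80, 0.82, 0.79, 0.81, 0.80])
lucky = summarize_runs([0.95, 0.40, 0.90, 0.35, 0.92])
```

In this invented data, the second model's best run beats every run of the first, yet its average is lower and its spread is far wider, which is exactly the pattern a single test run would hide.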

Hosted Benchmarking with Credits

To streamline your exploration, OpenMark operates on a credit system, eliminating the need for you to obtain, configure, and manage separate API keys for every model provider you want to test. This hosted approach means you can start benchmarking immediately, with all the complexity handled in the background. It turns a multi-day setup process into a few clicks, making sophisticated model evaluation accessible to every developer and team.

Use Cases

Claude Fast

From Solo Founder to Full Product Team

As a solopreneur, you wear every hat but can't be an expert in everything. Claude Fast acts as your instant, on-demand product team. You provide the core idea, and the framework orchestrates the specialists: a product manager to scope, a designer for UI/UX, frontend and backend developers to build, and the Infra Master to deploy. This turns your solo venture into a collaborative powerhouse, enabling you to go from a concept to a live MVP in weeks, not months.

Rapid Prototyping and Validation

You have multiple ideas but limited time to test them. Claude Fast accelerates the build-measure-learn loop dramatically. You can describe a new feature or a minimal product, and the system will rapidly generate the code, set up a basic deployment, and help you get it in front of users. This allows founders and developers to validate market fit and iterate on ideas with unprecedented speed, turning what used to be a quarterly goal into a weekly activity.

Overcoming Development Roadblocks

Every developer hits walls: a tricky bug, an unfamiliar framework, or a complex integration. Instead of spending hours in documentation and forums, you engage Claude Fast. The intelligent routing sends your specific, stuck-point problem directly to the specialist with the right skills. The context-aware system understands your entire codebase history, providing precise, actionable solutions that get you unstuck and back to productive coding in minutes.

Streamlined Application Maintenance & Scaling

Launching is just the beginning. Claude Fast continues to be an invaluable partner for post-launch life. Need to add a new feature, patch a security vulnerability, or scale your database? The session management lets you resume previous conversations about your infrastructure. The Infra Master skill can execute commands and the coordinated specialists can plan and implement changes cohesively, making ongoing maintenance and scaling a managed, systematic process rather than a crisis.

OpenMark AI

Validating a Model Before Feature Ship

A product team is weeks away from launching a new AI-powered summarization feature. They've shortlisted three models but need concrete data to make the final, responsible choice. Using OpenMark, they benchmark all three on their actual user prompts, comparing not just summary quality but also cost efficiency and consistency. The evidence guides them to the optimal model, de-risking the launch and ensuring a high-quality user experience from day one.

Cost-Efficiency Analysis for Scaling

A startup with a successful AI chatbot needs to optimize its growing inference costs. They suspect a smaller, cheaper model might perform adequately for most user queries. They use OpenMark to run their common question types against both their current premium model and several cost-effective alternatives. The side-by-side comparison of quality scores versus real API costs reveals the perfect balance, potentially saving thousands without degrading service.
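The trade-off in this scenario comes down to simple arithmetic, sketched below. The quality scores, per-request costs, and request volume are invented for illustration, and `ModelResult` and `compare` are hypothetical names rather than part of any OpenMark export format.

```python
from typing import NamedTuple


class ModelResult(NamedTuple):
    quality: float           # benchmark quality score, 0 to 1
    cost_per_request: float  # USD


def compare(current: ModelResult, candidate: ModelResult, requests_per_month: int) -> dict[str, float]:
    """Quantify what switching models would cost in quality and save in money."""
    return {
        "quality_drop": current.quality - candidate.quality,
        "monthly_savings": (current.cost_per_request - candidate.cost_per_request) * requests_per_month,
    }


# Made-up numbers for illustration only.
premium = ModelResult(quality=0.92, cost_per_request=0.010)
smaller = ModelResult(quality=0.89, cost_per_request=0.002)
report = compare(premium, smaller, requests_per_month=500_000)
```

With these assumed figures, the smaller model gives up about 0.03 in quality score and saves roughly $4,000 per month; whether that trade is acceptable remains a product decision.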

Building a Reliable RAG Pipeline

A developer is constructing a Retrieval-Augmented Generation system for a knowledge base. The choice of the final LLM for synthesis is critical. They use OpenMark to test various models with complex, multi-document queries, focusing heavily on the stability metric across repeat runs. This helps them select a model that provides factual, consistent answers every time, which is far more valuable than a model that occasionally produces brilliance but often hallucinates.

Agent Routing and Orchestration Decisions

An engineering team is designing an AI agent that must route subtasks to different specialized models. They need to know which model is best for classification, which excels at data extraction, and which is most cost-effective for simple formatting. OpenMark allows them to create a suite of micro-benchmarks for each task type, building a data-driven routing map that optimizes both performance and budget across their entire agentic workflow.
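A routing map of this kind could be derived from per-task benchmark results along the following lines. This is a sketch under stated assumptions: the data layout, the 0.8 quality threshold, the model names, and `build_routing_map` are all hypothetical, and the selection rule (cheapest model that clears the threshold) is just one reasonable policy.

```python
def build_routing_map(
    benchmarks: dict[str, dict[str, tuple[float, float]]],
    min_quality: float = 0.8,
) -> dict[str, str]:
    """Map each task to a model, given {task: {model: (quality, cost_per_request)}}."""
    routing = {}
    for task, results in benchmarks.items():
        # Sort key: lowest cost first, then highest quality as the tie-breaker.
        adequate = [
            (cost, -quality, model)
            for model, (quality, cost) in results.items()
            if quality >= min_quality
        ]
        if adequate:
            routing[task] = min(adequate)[2]
        else:
            # No model clears the bar, so fall back to the highest-quality one.
            routing[task] = max(results, key=lambda m: results[m][0])
    return routing


# Made-up benchmark results for two hypothetical models.
benchmarks = {
    "classification": {"model-a": (0.95, 0.010), "model-b": (0.90, 0.001)},
    "extraction": {"model-a": (0.93, 0.010), "model-b": (0.60, 0.001)},
    "formatting": {"model-a": (0.70, 0.010), "model-b": (0.75, 0.001)},
}
routing = build_routing_map(benchmarks)
```

Here the cheaper model wins classification because it still clears the threshold, the pricier one is kept for extraction, and formatting falls back to the best available score because neither model is adequate.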

Overview

About Claude Fast

You have the vision. A groundbreaking app, a life-changing feature, a business waiting to be born. You sit down with Claude Code, your most powerful ally, ready to build. But the journey quickly turns into a slog. You spend hours crafting the perfect prompt, only to get a vague response. You watch helplessly as Claude forgets the crucial context from three messages ago. You realize that to build anything real, you need a team of AI specialists (a project manager, a frontend dev, a backend architect, a deployment engineer), and you must manually orchestrate every handoff. Days vanish into debugging and prompt engineering, not creating. This was the reality, until now.

Claude Fast is your shortcut. It's not a simple prompt pack; it's a complete, pre-assembled AI development framework that transforms Claude Code from a raw, powerful tool into a seamless, intelligent co-founder. Built directly on Anthropic's own best practices and constantly evolving, it's a drag-and-drop configuration featuring 11 orchestrated AI specialists. It introduces revolutionary capabilities like native task management sync and intelligent context routing, effectively multiplying your productive context window.

For the founder, the solopreneur, and the developer, Claude Fast eliminates the setup friction and uncertainty. It turns what could take months of perfecting a workflow into a 30-second setup. Your journey shifts from wrestling with the tool to simply telling it what to build. You can finally move with confidence, knowing the system behind the scenes manages memory, tasks, and specialist coordination for you, from the first line of code to final deployment and beyond.

About OpenMark AI

Imagine you're building a new AI feature. You've read the spec sheets, you've seen the leaderboards, but a nagging question remains: which model is truly the best for your specific task? Not for a generic benchmark, but for the exact prompt, the precise nuance, the unique data you need to process. This is the journey OpenMark AI was built for.

It's a web application that transforms the complex, technical chore of LLM benchmarking into a straightforward, narrative-driven exploration. You simply describe your task in plain language (be it classification, translation, data extraction, or RAG), and OpenMark runs the same prompts against a vast catalog of over 100 models in a single session. The magic happens when you compare the results. You see not just a single, lucky output, but a comprehensive view of scored quality, real API cost per request, latency, and, crucially, stability across repeat runs. This reveals the variance, showing you which models are consistently reliable.

Built for developers and product teams making critical pre-deployment decisions, OpenMark eliminates the hassle of configuring separate API keys for every provider. With a hosted, credit-based system, you can focus on finding the model that delivers the right quality for your budget, ensuring your AI feature is built on a foundation of evidence, not guesswork.

Frequently Asked Questions

Claude Fast FAQ

How do I install and set up Claude Fast?

There is no traditional installation. Claude Fast is designed for seamless integration with Claude Code. You simply download the "Code Kit" and drag and drop the provided folder structure into your Claude Code project directory. Once loaded, you run a single initialization command. The entire framework, with its 11 pre-orchestrated specialists and 280+ skill files, is ready to use in under 30 seconds. You then just prompt Claude as normal, and the system activates behind the scenes.

Is Claude Fast compatible with the latest Claude Code updates?

Absolutely. The team behind Claude Fast is committed to constant evolution. The framework is built on Anthropic's official recommendations and is updated with every major Claude Code release, such as the recent update for the new Agent Teams feature in Code Kit v5.0. Purchasing Claude Fast grants you full lifetime access to all future updates, ensuring your development framework never becomes obsolete.

What's the difference between the Code Kit and the Growth Kit?

The Code Kit is your complete AI development framework, focused on building, debugging, and deploying software products. The Growth Kit is a separate but complementary framework tailored for sales, marketing, and research tasks—like generating SEO content, marketing copy, and conducting market analysis. The Complete Kit bundles both at a significant discount, providing an end-to-end solution to take you from your first commit to your first customer.

Do I need to be an expert developer to use Claude Fast?

Not at all. Claude Fast is designed to empower individuals at all skill levels. For beginners, it provides a guided, best-practice structure that teaches effective patterns as you build. For expert developers, it eliminates the boilerplate setup and cognitive overhead of managing AI agents, allowing them to focus on high-level architecture and complex problems. It meets you where you are and amplifies your ability to ship.

OpenMark AI FAQ

How does OpenMark ensure results are accurate and not cached?

OpenMark AI performs real, live API calls to each model provider during every benchmark run. The costs, latencies, and outputs you see are generated on-demand for your specific task. This guarantees you are comparing genuine, current performance data—the same experience you would have integrating the model directly—and not reviewing static, pre-computed marketing numbers that may not reflect real-world conditions.

What kind of tasks can I benchmark with OpenMark?

The platform is designed for a wide array of common and complex AI tasks. You can benchmark models for classification, translation, data extraction, question answering, research synthesis, image analysis, RAG (Retrieval-Augmented Generation) responses, agent routing logic, creative writing, and much more. If you can describe it in a prompt, you can likely build a benchmark for it.

Do I need my own API keys to use OpenMark?

No. One of the key conveniences of OpenMark is that it is a hosted benchmarking service. You operate using credits purchased or obtained through a plan. The platform manages all the underlying API connections to providers like OpenAI, Anthropic, and Google. This means you can start comparing models immediately without the administrative overhead of securing and configuring multiple keys.

Why is measuring stability or variance important?

A single test run can be misleading, as even the best models can occasionally produce a poor output, and weaker models can sometimes get lucky. By running your task multiple times and measuring variance, OpenMark shows you which models are consistently reliable. For shipping a production feature, consistency is often more critical than peak performance, as it builds user trust and ensures a predictable experience.

Alternatives

Claude Fast Alternatives

Claude Fast is a specialized AI development framework designed to transform Claude Code into a seamless, intelligent partner for building software. It belongs to the category of AI coding assistants, but it stands apart by offering a complete, pre-configured system of orchestrated specialists that manage memory, tasks, and context automatically.

Users often explore alternatives for various reasons. Some may seek different pricing models or need a tool that integrates with a specific development platform. Others might prioritize a different feature set, such as support for other AI models or a simpler, less structured approach to AI assistance. The search is a natural part of finding the right tool for one's unique workflow and budget.

When evaluating options, consider the core promise of a frictionless journey. Look for solutions that genuinely reduce setup time and cognitive load, not just add complexity. The ideal alternative should offer robust context management, clear task coordination, and intelligent routing that keeps you in a state of creative flow, turning your vision into code with minimal interruption.

OpenMark AI Alternatives

Choosing the right LLM for your project is a critical, often frustrating, step. OpenMark AI is a developer tool designed to cut through that uncertainty by letting you benchmark over 100 models on your specific task, comparing real-world cost, speed, quality, and output stability in a single browser session.

Developers and teams often explore alternatives for various reasons. Perhaps they need a solution that integrates directly into their CI/CD pipeline, requires a self-hosted option for data governance, or operates on a different pricing model. The needs of a solo builder differ from those of an enterprise team.

When evaluating other tools in this space, focus on what matters for your workflow. Key considerations include whether the tool tests with live API calls or cached data, how it measures and scores output quality for your use case, its model catalog coverage, and how it handles the practicalities of API keys and cost transparency across providers.